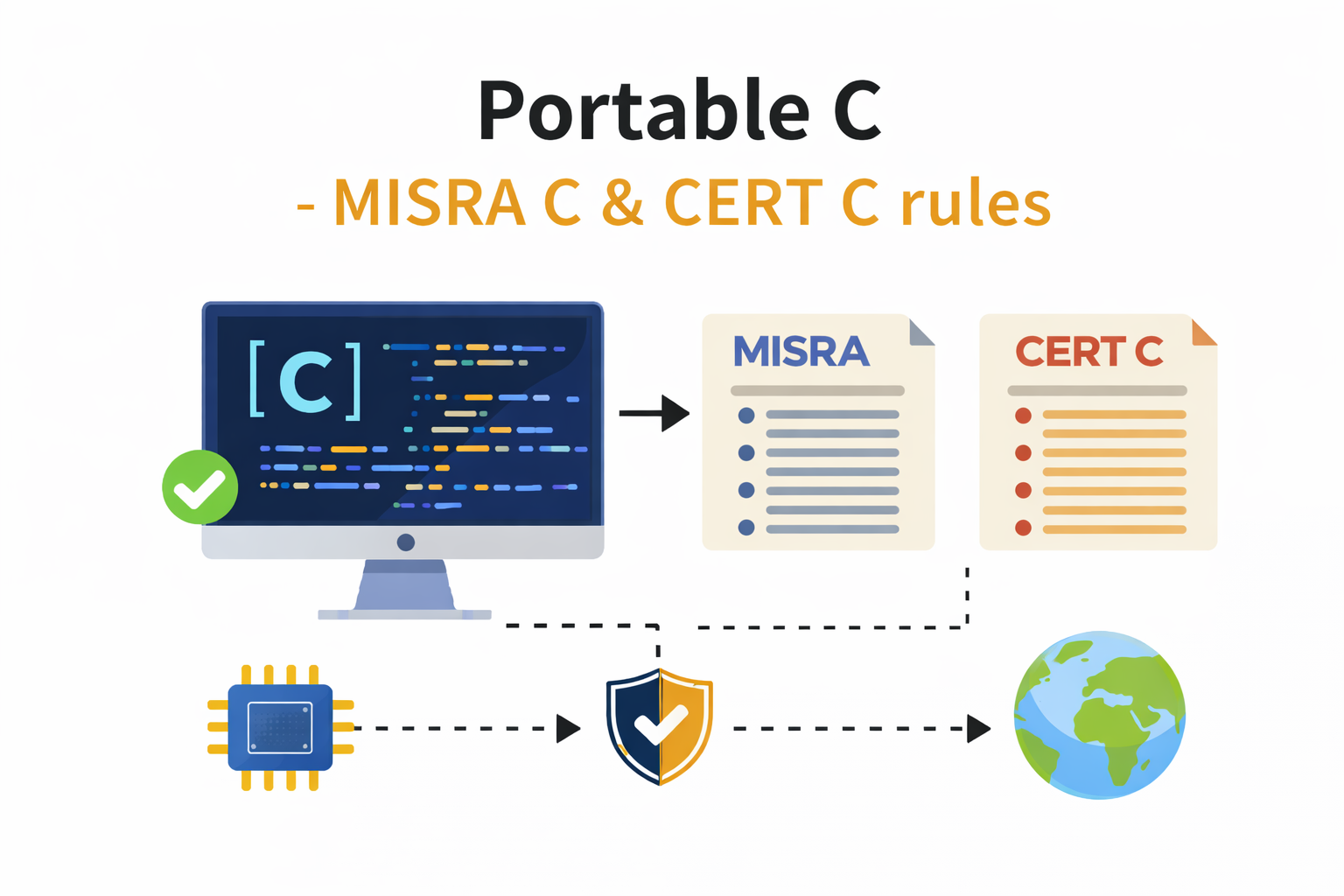

Portable and Secure C: A Practical Guide to MISRA C & CERT C

The C language has powered satellites, pacemakers, and aircraft flight computers for five decades. That longevity is not accidental, and neither is the discipline required to keep it safe.

- Why portability matters, and why it’s harder than you think

- The great myth of “Embedded C vs C”

- Who sets the guidelines, and by what authority

- MISRA C and CERT C: why two standards when we want both?

- Industry values and testing for security and portability

- Testing strategies that complement static analysis

- Failures, liabilities, and the price of non-compliance

- The future of C: C23, new ISO directions, and the long game

- Rust as a reference point

Why portability matters, and why it’s harder than you think

Ask a firmware engineer what portability means and you’ll likely hear something about target architectures: ARM Cortex-M versus RISC-V versus MIPS. Swap the compiler flags, rewrite the linker script, done. That’s migration, not portability.

Real portability is subtler and far more dangerous. It lives in the gaps between what the C standard defines, what your compiler assumes, and what your hardware silently does. It hides in a line like:

int x = 65536;

short s = (short)x; /* Implementation-defined. Could be 0. Could be -1. Could be anything. */

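On your 32-bit x86 development machine with GCC, that cast wraps predictably to 0. On a target with a different ABI the documented result may differ, and where int is 16 bits the initialiser itself no longer fits. The cast is implementation-defined rather than undefined, but the moment the same value feeds signed arithmetic that overflows, you are in undefined behaviour, which an optimising compiler may treat as a licence to remove your entire conditional.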
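One way to take the implementation out of the picture is to check the range before narrowing. The sketch below is illustrative only; the helper name narrow_to_i16 is hypothetical and not taken from either standard.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical helper: narrows a 32-bit value to 16 bits only when the
 * value is representable, so no implementation-defined conversion runs. */
bool narrow_to_i16(int32_t value, int16_t *out)
{
    if (value < INT16_MIN || value > INT16_MAX) {
        return false;          /* caller decides how to handle the range error */
    }
    *out = (int16_t)value;     /* in range: the result is fully defined */
    return true;
}
```

With this in place, the caller has to handle 65536 as an error instead of receiving whatever the toolchain happens to produce.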

“Portability is not a feature you bolt on at the end. It’s a constraint you design to from the start, or you pay for it with bugs that only manifest in the field.”

The cost of getting this wrong is measured in recalls, patch cycles, and, in safety-critical domains, human lives. The 1996 Ariane 5 Flight 501 disaster, caused by a 64-bit floating-point value being converted to a 16-bit integer without range checking, destroyed the rocket and its payload 37 seconds after launch, a loss commonly put at around $370 million. The code had been correct for Ariane 4. It was portable only by accident.

Portability matters because C is the language of constrained targets, and constrained targets are everywhere: automotive ECUs, industrial PLCs, medical implants, avionics, SCADA systems. The code you write today will outlive the compiler you used to build it. The board it runs on today may be replaced by something with a different word size tomorrow. Designing for portability isn’t an academic exercise. It’s professional hygiene.

The great myth of “Embedded C vs C”

There is a persistent belief in firmware circles that “Embedded C” is a separate dialect: a hardened, stripped-down version of the language with special rules for registers, memory-mapped I/O, and interrupt handlers. Developers treat it as an excuse. “Of course I’m using implementation-defined behaviour — this is embedded code.”

This is fiction. There is no Embedded C standard separate from C89, C99, C11, or C23. There are only C implementations targeting constrained environments, and all of them are still governed by ISO/IEC 9899.

The misconception

The myth likely originated from the fact that bare-metal code frequently uses genuinely non-portable constructs such as:

- inline assembly (code enclosed with #asm … #endasm)

- volatile-mapped peripherals

- interrupt attribute macros

These do feel “different” from desktop C, and they are. But they’re non-portable not because they’re “embedded”; they’re non-portable because they’re implementation extensions. The C standard has always accounted for this through its notions of undefined and implementation-defined behaviour. Recognising the boundary between standard C and your vendor’s extensions is what separates professional firmware from code that breaks mysteriously when you switch toolchains.

The practical consequence of the myth is that it discourages engineers from applying rigorous coding standards. MISRA C was written specifically for embedded and safety-critical C. Every rule in MISRA C 2023 has been crafted with resource-constrained, compiler-diverse, long-lived firmware in mind.

The myth also conflates two distinct problems. Platform-specific code (the __attribute__((interrupt)), the DMA register layout, the linker section pragma) belongs in a hardware abstraction layer (HAL), clearly bounded and walled off from everything else. Everything above that layer:

- the business logic

- the protocol stack

- the sensor driver

should be portable, standard C.

Calling everything “Embedded C” and shrugging at portability issues is how you end up with 40,000-line monolithic HAL files that might take months to port to a new SoC generation.
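A minimal sketch of that boundary, assuming a hypothetical 12-bit ADC and a 3300 mV reference. The names sensor_hal_t, sensor_read_millivolts, and fake_adc_full_scale are invented for illustration.

```c
#include <stdint.h>

/* Hypothetical HAL interface: the only place portable code touches hardware. */
typedef struct {
    uint16_t (*read_adc_raw)(void);   /* implemented per target inside the HAL */
} sensor_hal_t;

/* Portable logic: converts a 12-bit ADC reading to millivolts.
 * The 12-bit width and 3300 mV reference are illustrative assumptions. */
uint32_t sensor_read_millivolts(const sensor_hal_t *hal)
{
    uint32_t raw = (uint32_t)hal->read_adc_raw() & UINT32_C(0x0FFF);
    return (raw * UINT32_C(3300)) / UINT32_C(4095);
}

/* Host-side fake for unit tests; a real target would read a register here. */
uint16_t fake_adc_full_scale(void)
{
    return 4095u;
}
```

Because the conversion logic only sees the function pointer, it can be compiled and tested on a host machine, and a port to a new SoC touches only the function behind read_adc_raw.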

Who sets the guidelines, and by what authority

The landscape of C coding standards is fragmented in a way that reflects the industries that depend on C most heavily. Understanding who writes the rules and why their authority matters is essential before you can meaningfully apply them.

ISO / IEC JTC1 SC22 WG14

The C language itself is standardised by ISO/IEC JTC1/SC22 Working Group 14 (WG14). Its output (C89, C99, C11, C17, and now C23) is the normative foundation. Every compiler, coding standard, and static analysis tool ultimately refers back to these documents. WG14 doesn’t write portability guidelines for embedded systems; it defines what the language is.

MISRA

The Motor Industry Software Reliability Association began as a UK automotive consortium in the early 1990s. Their first C guidelines (1998) were explicitly aimed at making C safer for vehicular embedded systems. MISRA C is now on its fourth major edition (2023) and has been adopted far beyond automotive: aerospace (DO-178C contexts), medical devices, railway (EN 50128), and industrial control. The MISRA standard is a purchased document, which shapes how it propagates through supply chains (usually mandated by OEMs on their Tier 1 and Tier 2 suppliers).

CERT / Carnegie Mellon SEI

The CERT Coordination Center at Carnegie Mellon’s Software Engineering Institute took a different approach. Their CERT C Coding Standard (now in its third edition, aligned to C11) emerged from secure software research and is publicly available. CERT’s perspective is fundamentally security-first: the rules exist to eliminate classes of vulnerability — buffer overflows, integer wrapping exploits, race conditions — rather than to satisfy automotive functional safety requirements.

IEC, AUTOSAR, DO-178C

Domain-specific standards layer on top of or reference MISRA and CERT. IEC 61508 (functional safety) mandates the use of appropriate coding standards; AUTOSAR’s C++ guidelines borrow heavily from MISRA philosophy; DO-178C for airborne software requires “coding standards” without specifying which — in practice, MISRA C is the industry answer.

| Body | Standard | Focus | Access | Primary Domain |
|---|---|---|---|---|
| ISO WG14 | ISO/IEC 9899 | Language definition | Purchased | Universal |
| MISRA | MISRA C 2023 | Safety + portability | Purchased | Automotive, safety-critical |
| CERT SEI | CERT C (C11) | Security + correctness | Free / online | Systems, secure software |
| IEC | IEC 61508 | Functional safety process | Purchased | Industrial, all domains |

MISRA C and CERT C: why two standards when we want both?

This is the question every team asks when they first encounter the landscape. Both standards exist to make C safer and more correct. Both prohibit dangerous constructs. Both reference the same underlying C standard. So why are there two, and which one should you use?

The answer reveals something important about the nature of “safe code” itself: the threat model changes depending on what you’re protecting against.

MISRA C 2023 (Safety-by-design)

- Rooted in functional safety - IEC 61508, ISO 26262

- Focuses on determinism, defined behaviour, and portability across toolchains

- Rule-based with mandatory, required, and advisory tiers

- Steers code toward fixed-width types from stdint.h

- Prohibits dynamic memory allocation entirely (Rule 21.3)

- Restricts recursion (Rule 17.2), so stack depth stays bounded

- Designed for compliance auditing and tool certification

- Deviation process: formal documented process for justified departures
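To make these constraints concrete, here is a small queue written in that spirit: fixed-width types, storage sized at compile time, no heap, and no recursion. It is a sketch under those assumptions, not code that has been checked against a MISRA analyser, and the names byte_queue_t, queue_push, and queue_pop are hypothetical.

```c
#include <stdbool.h>
#include <stdint.h>

#define QUEUE_CAPACITY 8u   /* hypothetical size, fixed at compile time */

typedef struct {
    uint8_t  items[QUEUE_CAPACITY];
    uint32_t head;    /* index of the oldest item */
    uint32_t count;   /* number of stored items */
} byte_queue_t;

/* Stores one byte; returns false when the queue is full. */
bool queue_push(byte_queue_t *q, uint8_t value)
{
    if (q->count >= QUEUE_CAPACITY) {
        return false;                       /* full: no hidden allocation */
    }
    q->items[(q->head + q->count) % QUEUE_CAPACITY] = value;
    q->count++;
    return true;
}

/* Removes the oldest byte; returns false when the queue is empty. */
bool queue_pop(byte_queue_t *q, uint8_t *value)
{
    if (q->count == 0u) {
        return false;
    }
    *value = q->items[q->head];
    q->head = (q->head + 1u) % QUEUE_CAPACITY;
    q->count--;
    return true;
}
```

The worst-case memory use is visible in the type definition, which is the property a functional safety assessor is looking for.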

CERT C Coding Standard (Security-by-default)

- Rooted in secure software research: CVE patterns and the CWE taxonomy (CWE: Common Weakness Enumeration; CVE: Common Vulnerabilities and Exposures)

- Focuses on eliminating exploitable vulnerability classes

- Organised into rules and recommendations, each rated by severity, likelihood, and remediation cost to give a priority level (L1-L3)

- Prioritises input validation and boundary checking

- Addresses concurrency and signal safety (POSIX context)

- More permissive on dynamic memory, but with strict rules for its use

- Free to use, openly published, constantly updated

- Maps to CWE identifiers for traceability to vulnerability databases

The key distinction: MISRA asks “will this code behave correctly on the target?” while CERT asks “can an adversary abuse this code?”

These are related but not the same question. A MISRA-compliant pacemaker firmware is deterministic and portable, but if it has a buffer overread in a BLE command parser, CERT would flag it as exploitable.

A CERT-hardened connected gateway might handle all attack surfaces correctly but contain integer types that invoke implementation-defined behaviour on a 16-bit MCU, which MISRA would prohibit.
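A parser that satisfies both lenses might look like the sketch below: fixed-width types throughout, and every length checked against both the destination buffer and the bytes actually received. The frame layout [opcode][length][payload] and the names command_t and command_parse are assumptions made for illustration.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define CMD_MAX_PAYLOAD 16u   /* hypothetical protocol limit */

typedef struct {
    uint8_t opcode;
    uint8_t length;
    uint8_t payload[CMD_MAX_PAYLOAD];
} command_t;

/* Parses [opcode][length][payload...]; returns false on any malformed frame. */
bool command_parse(const uint8_t *frame, size_t frame_len, command_t *out)
{
    if (frame == NULL || out == NULL || frame_len < 2u) {
        return false;
    }
    uint8_t declared = frame[1];
    if (declared > CMD_MAX_PAYLOAD) {
        return false;   /* would overflow out->payload */
    }
    if ((size_t)declared > frame_len - 2u) {
        return false;   /* would read past the end of the received frame */
    }
    out->opcode = frame[0];
    out->length = declared;
    memcpy(out->payload, &frame[2], declared);
    return true;
}
```

The second length check is the one that stops an overread when a sender declares more payload than it actually transmitted.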

The pragmatic answer

Use both, layered.

MISRA C as the portability and safety baseline, especially for any component that must pass a functional safety assessment.

CERT C as the security review lens, especially for any component that handles external inputs:

- network packets

- file parsing

- command interfaces.

Where the two overlap, you get redundant safeguards and extra coverage. Where they diverge, document your risk decision explicitly.

Teams building under IEC 62443 (industrial security) or UNECE WP.29 (automotive cybersecurity regulation) increasingly need to demonstrate compliance with both families simultaneously. The tools have caught up: modern static analysers like

- PC-lint Plus

- Polyspace

- Helix QAC

- CodeSonar

support simultaneous MISRA and CERT rule sets with unified dashboards.

Industry values and testing for security and portability

Knowing the standards is necessary but not sufficient. The real question is how engineering organisations internalise them and how they verify compliance in a way that is meaningful rather than performative.

The compliance theatre problem

A deviation log is a formal record of every place where your code intentionally violates a rule from a coding standard (like MISRA C or CERT C), along with a justification for why that violation is acceptable.

It’s not a workaround; it’s part of the official compliance process. But if your deviation log is longer than your coding standard compliance summary, something has gone wrong. Deviations should be documented with specific justification, and reviewed.

One pattern is very common in the embedded industry: a project adopts MISRA, runs a static analyser at the end of development, gets thousands of violations, and proceeds to suppress them all with blanket deviation justifications before the audit. The code ships. The certificate is granted. But none of that changes the risk profile.

Testing strategies that complement static analysis

Static analysis finds structural violations. It doesn’t find all runtime behaviour. A few approaches that work well alongside it:

Mutation testing verifies that your test suite actually detects behavioural changes. A test that doesn’t kill a mutant isn’t testing anything. Tools like mull (LLVM-based) work with embedded cross-compilation pipelines.
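To see what “killing a mutant” means, consider a hypothetical threshold check and the mutant a tool would typically generate from it by swapping >= for >. Both function names are invented for this sketch; a real tool produces the mutant automatically.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical function under test: trips at or above the limit. */
bool over_temperature(int16_t celsius, int16_t limit)
{
    return celsius >= limit;
}

/* Hand-written stand-in for a typical mutant: >= replaced with >.
 * Only a test at the exact boundary can tell the two apart. */
bool over_temperature_mutant(int16_t celsius, int16_t limit)
{
    return celsius > limit;
}
```

Tests at 20 and 120 degrees against a limit of 85 pass for both versions, so they leave the mutant alive. A test at exactly 85 is the one that kills it.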

Sanitisers on host-side unit tests: running ASan, UBSan, and MSan against your portable logic layer catches undefined behaviour at runtime, before the hardware ever sees the code. UBSan is especially useful for the signed integer overflow, invalid shift, and misaligned access issues that MISRA rules are designed to prevent.
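As an illustration, the first function below contains a signed overflow that a host build with GCC or Clang and -fsanitize=address,undefined would report the first time a test drives it with large inputs. The function names are hypothetical, and the sketch assumes a host where int is 32 bits.

```c
#include <stdint.h>

/* Overflows when lo + hi exceeds INT32_MAX: undefined behaviour that
 * UBSan reports at runtime as a signed integer overflow. */
int32_t midpoint_naive(int32_t lo, int32_t hi)
{
    return (lo + hi) / 2;
}

/* Safe for 0 <= lo <= hi: the subtraction cannot overflow in that range. */
int32_t midpoint_safe(int32_t lo, int32_t hi)
{
    return lo + (hi - lo) / 2;
}
```

Both versions agree on small values, which is exactly why the naive one survives casual testing.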

Fuzz testing command interfaces: any code that parses external input (AT commands, CAN frames, Modbus PDUs, BLE characteristics) should be fuzz-tested. libFuzzer and AFL++ can be run against your protocol layer built for a host simulator.
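A libFuzzer harness is a single entry point that receives arbitrary bytes. The sketch below wires it to a hypothetical validator, frame_is_valid, standing in for a real protocol parser. With Clang it would typically be built with -fsanitize=fuzzer,address.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical parser under test: accepts frames of the form
 * [length][payload...] where length must match the bytes that follow. */
int frame_is_valid(const uint8_t *data, size_t size)
{
    if (data == NULL || size < 1u) {
        return 0;
    }
    return ((size_t)data[0] == size - 1u) ? 1 : 0;
}

/* libFuzzer entry point: called repeatedly with mutated inputs.
 * The harness must tolerate any byte sequence and return 0. */
int LLVMFuzzerTestOneInput(const uint8_t *data, size_t size)
{
    (void)frame_is_valid(data, size);
    return 0;
}
```

The harness asserts nothing itself. The sanitisers linked into the fuzzing build do the detecting when the parser misbehaves.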

**Architecture-first approach**

Traditional embedded V-model development defers security and portability testing to verification phases.

By that point, architectural decisions that create portability problems are already baked in. Make type choices, integer promotion decisions, and platform assumptions part of the design review, not the code review.
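One lightweight way to bring those decisions into the design is to write platform assumptions as compile-time checks, so a port to a target that violates them fails at build time instead of in the field. The particular assumptions and the checksum_add helper below are illustrative, not a recommended set.

```c
#include <limits.h>
#include <stdint.h>

/* Platform assumptions recorded where the compiler can check them (C11). */
_Static_assert(CHAR_BIT == 8, "code assumes 8-bit bytes");
_Static_assert(INT_MAX >= 2147483647, "code assumes int is at least 32 bits");

/* Both operands are widened explicitly, so the sum cannot wrap before the
 * cast; the cast then states the intended wrap-to-8-bits result, which is
 * well defined for unsigned types. */
uint8_t checksum_add(uint8_t acc, uint8_t byte)
{
    return (uint8_t)((unsigned int)acc + (unsigned int)byte);
}
```

On a 16-bit MCU the second assertion stops the build, which turns a silent integer-promotion difference into a visible design question.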

Failures, liabilities, and the price of non-compliance

The standards community presents MISRA and CERT as good professional practice. Industry regulators increasingly present them as legal instruments. Understanding both framings changes how seriously engineers and management take them.

Ariane 5, Flight 501 (1996)

The inertial reference system (SRI), reused from Ariane 4, attempted to convert a 64-bit floating-point velocity value to a 16-bit signed integer. The value was outside the representable range, and the conversion raised an exception that nothing handled. The backup SRI failed identically. The rocket self-destructed 37 seconds after launch.

Root cause: an unchecked narrowing conversion. The flight software was written in Ada rather than C, but the lesson carries over directly: in C, the equivalent out-of-range floating-to-integer conversion is undefined behaviour, and MISRA’s essential type rules (Rule 10.3 restricts assignment to a narrower or different essential type) are designed to surface it at static analysis time. Cost: approximately $370M USD.
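The C analogue of the missing range check is short. This is a sketch of the general technique, not a reconstruction of the flight code, and the helper name double_to_i16 is hypothetical.

```c
#include <stdbool.h>
#include <stdint.h>

/* Converts a double to int16_t only when the truncated value is representable.
 * In C, an out-of-range floating-to-integer conversion is undefined behaviour. */
bool double_to_i16(double value, int16_t *out)
{
    /* Both comparisons are false for NaN, so NaN is rejected as well. */
    if (!(value > -32769.0 && value < 32768.0)) {
        return false;
    }
    *out = (int16_t)value;   /* truncates toward zero; result is in range */
    return true;
}
```

The caller is forced to decide what an out-of-range reading means, whether that is to saturate, flag the sensor, or fall back, instead of letting the conversion decide for it.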

Therac-25 Radiation Therapy Device (1985–1987)

Race conditions in the control software allowed a high-power beam to fire without the physical safety shield in place. At least six patients received massive radiation overdoses. Three died.

Source and further reading: Killer Bug. Therac-25: Quick-and-Dirty

Root cause: shared mutable state accessed without synchronisation. The Therac-25 software was written in PDP-11 assembly, so no C rule applied literally, but CERT’s concurrency rules (for example CON43-C, do not allow data races in multithreaded code) address exactly this class of defect. No software coding standard was in use.

Boeing 737 MAX MCAS (2018–2019)

The Maneuvering Characteristics Augmentation System relied on input from a single angle-of-attack sensor. Insufficient validation of sensor inputs and a lack of defensive coding around single-point-of-failure data paths contributed to two crashes killing 346 people.

Root cause: Insufficient input validation and defensive programming. CERT input validation guidelines apply directly. Boeing paid over $2.5 billion in settlements and penalties. The FAA and EASA have since tightened software assurance requirements for aviation.

The regulatory shift

Since 2020, the regulatory environment for software-intensive embedded systems has shifted measurably. UNECE WP.29 requires automotive OEMs to demonstrate cybersecurity management processes, including coding standards. The EU Cyber Resilience Act (2024) extends software security requirements to all connected products sold in Europe. FDA guidance on medical device software explicitly references IEC 62443 and CERT-aligned practices. Source - https://digital-strategy.ec.europa.eu/en/policies/cyber-resilience-act

In regulated markets, shipping without demonstrable adherence to appropriate coding standards is a compliance exposure. Engineering teams that treat MISRA and CERT as optional will increasingly find themselves in front of procurement questionnaires, regulatory auditors, and after incidents, liability lawyers who understand the standards as well as they do.

The future of C: C23, new ISO directions, and the long game

C is not standing still. C23 (ISO/IEC 9899:2024, formally published in October 2024) is the most significant revision in over a decade, and several of its additions directly address the portability and safety concerns that drove MISRA and CERT in the first place.

nullptr and _BitInt(N)

A proper null pointer constant eliminates the NULL/0 ambiguity that has caused subtle bugs in type-generic code for decades. _BitInt(N) provides bit-precise integers of a width you choose, up to the implementation’s BITINT_MAXWIDTH. That is a direct answer to MISRA’s long-standing concern about implementation-defined integer sizes on 16-bit and 32-bit targets. You can now declare _BitInt(12) and get a type exactly 12 bits wide, portably.

typeof and auto

Type inference joins standard C. Used carefully, typeof can eliminate dangerous implicit conversions in macro-heavy embedded code, like the kind of macro gymnastics that previously forced engineers to choose between portability and readability. MISRA C may treat these cautiously in its next edition, but the direction is sound.

For example:

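#define MAX(a, b) ((a) > (b) ? (a) : (b))

This looks harmless, until the operands mix signed and unsigned types:

int a = -1;
unsigned int b = 1;
printf("%u\n", MAX(a, b));

Here a is implicitly converted to unsigned, so -1 becomes UINT_MAX and the macro reports it as the maximum. Each argument is also evaluated twice, which matters when it has side effects. Both problems run against MISRA’s essential type model.

typeof lets the macro capture each argument exactly once and reject mismatched types at compile time. Note that this version also relies on two GCC/Clang extensions, statement expressions and __builtin_types_compatible_p, so it is not pure standard C:

#define MAX(a, b) ({ \
_Static_assert(__builtin_types_compatible_p(typeof(a), typeof(b)), \
"MAX: operands must have the same type"); \
typeof(a) _a = (a); \
typeof(b) _b = (b); \
_a > _b ? _a : _b; \
})

Why this helps:

- Each argument is evaluated once

- The temporaries keep the arguments’ declared types

- A mixed-sign call no longer compiles, so the conversion has to be written explicitly

The earlier call now has to state its intent:

int a = -1;
unsigned int b = 1;
printf("%d\n", MAX(a, (int)b));

The comparison is now between two int values, and the program prints 1.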

Standardised attributes

[[nodiscard]], [[deprecated]], and [[noreturn]] are now standard C, not GCC extensions. This reduces reliance on __attribute__ compiler-specific syntax — a portability win that MISRA has long advocated through its restrictions on implementation extensions. Your toolchain-agnostic attribute usage no longer requires a portability shim.

Checked integer arithmetic

ckd_add, ckd_sub, and ckd_mul, declared in <stdckdint.h>, provide a standard way to detect integer overflow without invoking undefined behaviour. This addresses one of the most common sources of both MISRA violations and CERT-catalogued CVEs. There is no longer any excuse for unchecked arithmetic on values whose range you cannot statically guarantee.
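A sketch of how ckd_add might be wrapped, with a hand-written fallback for toolchains that do not yet ship <stdckdint.h>. The wrapper name add_i32_checked is hypothetical, and the header detection relies on __has_include being available.

```c
#include <stdbool.h>
#include <stdint.h>

#if defined(__has_include)
#  if __has_include(<stdckdint.h>)
#    include <stdckdint.h>   /* C23 checked-arithmetic macros */
#  endif
#endif

/* Adds two int32_t values; returns false instead of overflowing.
 * On failure, the contents of *sum should not be relied upon. */
bool add_i32_checked(int32_t a, int32_t b, int32_t *sum)
{
#if defined(ckd_add)
    return !ckd_add(sum, a, b);          /* ckd_add returns true on overflow */
#else
    /* Pre-C23 fallback: test the range before performing the addition. */
    if ((b > 0 && a > INT32_MAX - b) || (b < 0 && a < INT32_MIN - b)) {
        return false;
    }
    *sum = a + b;
    return true;
#endif
}
```

Either branch gives the caller a boolean to act on, so the overflow case becomes an ordinary error path rather than undefined behaviour.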

MISRA C and C23 alignment

MISRA C 2023 was released before C23 was finalised. Expect a later MISRA C edition or amendment to address C23 constructs, particularly _BitInt, checked arithmetic, and the new attribute syntax.

The direction is towards tighter integration between language-level safety features and the rule sets that enforce their correct use.

Rust as a reference point

Rust is the most hyped programming language of 2025-26, driven by its compile-time memory-safety guarantees via ownership and lifetimes. Those guarantees have added momentum to C standards work on bounded pointers (Annex V concepts) and lifetime annotations that tools can verify statically. Rather than wholesale ports to Rust, the direction is for C to express ownership through native idioms. The NSA’s 2022 guidance on memory-safe languages has pushed the C community toward language changes that go beyond coding practices alone.

The question nobody is asking loudly enough

C has survived because nothing else reliably delivers deterministic performance, minimal runtime overhead, direct hardware access, and cross-compiler portability at scale. Rust is genuinely better at memory safety. It is not yet better at the full embedded development workflow: build system fragility, toolchain maturity for exotic targets (custom MCUs), and the sheer mass of existing validated C codebases all slow adoption. That picture is changing faster than most embedded teams realise.

“The future of safe C is not C that has learned to be Rust, with its stronger memory-safety guarantees. It’s C that has learned to be honest about its own undefined behaviour, and provides language-level tools to manage it.”

MISRA and CERT are documenting, systematically, the discipline that makes C survivable. C23’s checked arithmetic functions and _BitInt are the standard committee’s acknowledgment that MISRA and CERT identified real problems, and the language needed to respond.

For working embedded engineers, the practical takeaway is simple: learn both standards. Not to satisfy auditors, but because they encode hard-won knowledge about how C actually behaves in production on constrained hardware.

The rules are not arbitrary. Every MISRA rule has a horror story behind it. Every CERT recommendation has a CVE attached.

Read the standards. Run the tools. And the next time someone tells you that portability doesn’t matter because “this is just embedded code”, tell them the Ariane 5 story.

Further reading

- MISRA C 2023 — misra.org.uk

- CERT C Coding Standard — wiki.sei.cmu.edu/confluence/display/c

- C23 final draft (N3220) — open-std.org/jtc1/sc22/wg14

- Static analysis tooling — PC-lint Plus, Helix QAC (Perforce), Polyspace (MathWorks), Cppcheck with MISRA addon