Local First: Building a Portable Embedded C++ Pipeline with Self-Hosted CI

How Woodpecker CI, Cosmopolitan, and Google Pigweed give small firmware teams automated build-test-flash pipelines

In good DevOps practice, automating the build, test, and release of software plays a prominent role. CI (continuous integration) acts as a safety net: it takes raw materials (code), assembles them (builds), and quality-checks them (tests). Without reliable CI, the second half of the acronym (CD: continuous delivery/deployment) becomes too risky and can lead to serious production issues. Plenty of DevOps tools offer complete CI/CD services, but things get tricky when developing and testing embedded software.

The Problem Nobody Is Talking About

CI/CD is mature in web and backend development. For embedded firmware, the picture is more uneven.

Some teams have real pipelines: GitLab CI/CD runners that cross-compile, run QEMU simulations, flash a board through a self-hosted runner with USB access, collect serial logs, and push artifacts to a dashboard.

These setups work and they exist in production today. But they’re almost always custom-built, tricky to maintain, and tightly coupled to one specific board, one specific CI platform, and one team’s institutional knowledge. When the engineer or team who set it up leaves, the pipeline starts to rot.

At the other end, many small firmware teams have no CI at all, or a GitHub Actions workflow that cross-compiles and calls it a day. Logic bugs that a 10-second host simulation would have caught survive to hardware integration, where they take days to diagnose. Full regression testing is sometimes missing, so QA engineers have to run it manually.

The gap isn’t between “no CI” and “perfect CI.” It’s between ad-hoc, fragile setups and something that is:

- portable across development boards and operating systems;

- self-hosted without cloud dependency (for compliance reasons, aerospace, defense, and medical teams often resist pushing proprietary source code or large firmware binaries to Microsoft’s or GitLab’s public cloud platforms); and

- structured enough that it doesn’t collapse when the people who set up the pipeline leave the team.

Hardware-in-the-loop (HIL) testing — the gold standard for embedded validation — sits at the top of this problem. It requires connecting your firmware to a system that simulates sensors, actuators, and real-world signals in real time. At the professional level, that means dSPACE, National Instruments VeriStand, or Vector CANoe — rigs costing $50,000 to $200,000 per bench, with dedicated test engineers to operate them.

In this blog post I propose a better way, and it costs nothing but engineering time to set up.

Three Tools, One Insight

The stack is Woodpecker CI + Cosmopolitan Libc + Google Pigweed. None of these tools were designed together, but they address complementary pain points.

Embedded teams don’t lack motivation for CI; they lack infrastructure that isn’t hardware-locked, OS-specific, or overly cloud-dependent. This stack is the opposite on all three counts: hardware-agnostic, portable, and cloud-independent. Full analog HIL (simulating every complex electrical characteristic) may remain out of scope for small teams, but together these tools target the automation gap. They move the needle from manual laboratory testing to automated on-target testing, so teams catch bugs early in the CI/CD pipeline rather than at the very end of the V-Diagram.

Here’s what each tool contributes:

| Tool | Role in the solution | Why it matters |
|---|---|---|
| Google Pigweed | The embedded framework | Pigweed is a collection of libraries designed for embedded development. It provides the hardware-agnostic C++ architecture layer, with modules for unit testing, RPC, and device abstraction that work the same on your host machine as on the microcontroller, narrowing the gap between simulation and real hardware testing. |
| Cosmopolitan Libc | The portability engine | This is the “secret sauce.” Cosmopolitan Libc compiles C/C++ once into an Actually Portable Executable (APE) that runs natively on Linux, macOS, Windows, FreeBSD, OpenBSD, and NetBSD. This removes OS-specific barriers and makes build tools and test runners platform-independent. |
| Woodpecker CI | The orchestrator | A lightweight, self-hosted, container-based CI engine. You can run it on a local server or even a Raspberry Pi, which keeps your IP and binaries on-premises, on your lab bench, with USB access to real hardware and no cloud dependency. |

```mermaid
flowchart TD
    subgraph DevOS ["Dev OS"]
        A["Developer PC<br>Win/Mac/Linux"]
    end

    subgraph Cosmo ["Cosmopolitan"]
        B["cosmocc toolchain<br>One build binary runs anywhere"]
    end

    subgraph PW ["Pigweed"]
        C["Host Tests<br>pw_unit_test on x86"]
        D["Device Tests<br>pw_unit_test flashed to MCU"]
    end

    subgraph WP ["Woodpecker CI"]
        E["Self-Hosted Lab CI<br>Runs on lab/bench hardware"]
        F["Compile Step"]
        G["Host Test Step"]
        H["Hardware Flashing & Testing"]
    end

    A -->|"Uses"| B
    B -->|"Builds"| C
    A -->|"git push"| E
    E -->|"Schedules"| F
    F -->|"Triggers"| G
    E -->|"Waits for USB Probe"| H
    H -->|"Flashes to"| D
```

This stack is particularly potent because of how these three interact:

Eliminating “It works on my machine”: With Cosmopolitan, your host-side build tools and test runner are single binaries that run on any developer’s laptop and on the CI runner, without complex dependency management or specific OS versions.

Local-First Development: Pigweed emphasizes that code should be testable on the host (your PC). You can run thousands of unit tests in seconds locally, and Woodpecker runs the same tests on every push, before the code ever touches a physical chip.

Low Overhead: Unlike heavy enterprise CI suites, Woodpecker is small and fast. Paired with host-side Pigweed tests, it can shrink your feedback loop (the time from “code save” to “test pass”) from minutes to seconds.

What HIL Testing Is, and Why It’s Broken

Hardware-in-the-loop testing works by connecting a real embedded control unit (ECU or MCU) to a system that electronically simulates the sensors and actuators it controls. The device runs actual production firmware. The surrounding environment is simulated. The result is a controlled, repeatable, automatable test environment that catches the class of bugs that only appear when software meets real electrical signals: timing violations, interrupt storms, ADC noise sensitivity, communication protocol edge cases.
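The “simulated environment” is, at its core, a plant model stepped forward in time around the controller. A real HIL rig runs such a model in real time and turns its outputs into electrical signals; the sketch below keeps both sides in software, which is closer to the SIL stage. The first-order thermal model, its parameters, and the proportional controller are all hypothetical, chosen only to show the loop structure.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical first-order thermal plant:
//   dT/dt = (heater_gain_c * u - (T - ambient_c)) / tau_s
struct ThermalPlant {
  double temperature_c;
  double ambient_c;
  double tau_s;          // time constant in seconds
  double heater_gain_c;  // steady-state temperature rise at full heater power

  // Advance the model by dt_s seconds with heater duty u in [0, 1] (explicit Euler step).
  void Step(double u, double dt_s) {
    assert(dt_s > 0.0 && tau_s > 0.0);
    const double dT_dt = (heater_gain_c * u - (temperature_c - ambient_c)) / tau_s;
    temperature_c += dT_dt * dt_s;
  }
};

// Controller under test: proportional control, output clamped to [0, 1].
double ProportionalDuty(double setpoint_c, double measured_c, double kp) {
  const double u = kp * (setpoint_c - measured_c);
  return std::fmin(std::fmax(u, 0.0), 1.0);
}

// Closed loop: the environment is simulated, the controller is the real logic.
double RunClosedLoop(double setpoint_c, double seconds, double dt_s) {
  ThermalPlant plant{20.0, 20.0, 30.0, 50.0};
  for (double t = 0.0; t < seconds; t += dt_s) {
    plant.Step(ProportionalDuty(setpoint_c, plant.temperature_c, 0.5), dt_s);
  }
  return plant.temperature_c;
}
```

With a proportional-only controller the loop settles slightly below the 40 °C setpoint (at 1020/26 ≈ 39.23 °C for these made-up parameters), exactly the kind of steady-state behavior a plant-in-the-loop test can check long before hardware exists.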

HIL is mandatory in high-reliability fields like automotive, aerospace, and medical devices. The problem is structural: even teams that have HIL rigs struggle to integrate them into CI because:

- The rig is physically separate from the build system. Running tests means manually walking a firmware binary over to the rig, flashing it, and reading results off a proprietary GUI.

- Cloud CI has no concept of hardware. GitHub Actions runners don’t have USB ports, serial interfaces, or JTAG adapters.

- The validation gap. Software tests run on x86 hosts in simulation. Hardware tests run on ARM boards in the lab. These are separate activities, maintained by different people, with different test codebases. When simulation passes and hardware fails, diagnosis starts from zero.

- Toolchain portability. The cross-compiler that produces the firmware binary behaves differently across developer machines, creating “works on my machine” problems before you even reach the hardware.

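This stack tackles the first three of these problems directly and the fourth partially: Cosmopolitan makes host-side tooling portable, but the ARM cross-compiler itself still has to be pinned separately (for example, through Pigweed’s hermetic environment or a fixed container image). Full analog HIL remains out of scope, but the gap between “no testing” and “automated on-target testing” is where most small teams need help.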

Getting Started

The recommended path to building this pipeline:

- Start with Pigweed’s Sense showcase: fork it and study how the facade/backend pattern is applied to a real project.

- Add pw_unit_test to one module: pick a logic-heavy module in your current firmware, abstract the hardware, and write five host-side tests.

- Stand up Woodpecker CI: run Woodpecker’s Docker Compose quick start on a lab machine and get host-side tests running in CI automatically.

- Build your Cosmopolitan runner: compile your host-side test harness with cosmocc and commit the binary, so every developer machine and CI agent runs the same tool.

- Attach a board to your Woodpecker agent: configure pw_target_runner, write a hardware-gated workflow, and run your first automated on-device test in CI.

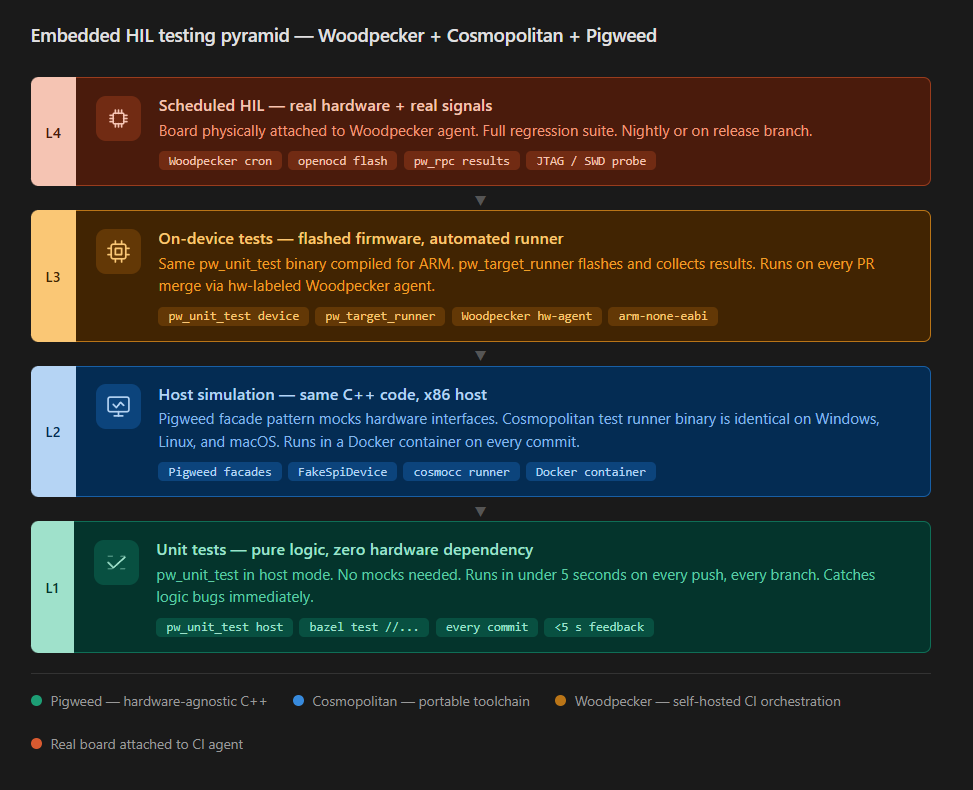

The Testing Pyramid This Stack Enables

The embedded testing pyramid has four levels. This stack covers the first three well and partially addresses the fourth.

Each level catches a different class of bug:

- Level 1 (Unit Tests): Pure logic, zero hardware dependency. Runs in under 5 seconds on any machine.
  - What it does: Pigweed provides a framework for host-side unit tests that run rapidly without physical hardware.
  - Why it matters: This aligns with the Model-in-the-Loop (MIL) and Software-in-the-Loop (SIL) stages of the V-Diagram, where the controller and environment are simulated to catch logic errors early.

- Level 2 (Host Simulation): Pigweed facade pattern, with the HAL mocked for the host. The Cosmopolitan-built test runner is the same binary on every OS.
  - What it does: Pigweed is designed for hermetic builds, meaning software is built in an isolated environment that is insensitive to the libraries, tools, or configurations installed on the host machine, so the development environment is reproducible. Cosmopolitan Libc reinforces this by making C/C++ “build-once run-anywhere,” with the same executable running on Linux, macOS, Windows, and even bare-metal BIOS.
  - Why it matters: Pigweed’s architecture (typically a facade pattern for hardware abstraction layers) combined with Cosmopolitan keeps host-side simulations reliable and consistent across developer operating systems.

- Level 3 (On-Device Tests): Flashed firmware with an automated test runner reporting over pw_rpc. Runs automatically through a Woodpecker hardware-attached agent, on every PR or every merge to main.
  - What it does: Pigweed explicitly supports on-device unit tests and communication with hardware via pw_rpc.
  - Why it matters: Woodpecker CI provides the automation engine that triggers these tests. With a hardware-attached agent (a Woodpecker agent running on the machine the board is plugged into), teams can automate flashing and testing firmware for every change.

- Level 4 (Scheduled HIL): Triggered on main-branch merges or nightly. This level is where the gap between on-target unit tests and true HIL (with analog signal simulation) becomes important: this stack automates on-target testing well, but full HIL with simulated physical signals still requires additional test equipment.
  - What it does: True HIL goes beyond simple on-target execution; it requires electrical emulation of sensors and actuators (the plant simulation).
  - Why it matters: This stack automates the software side (on-target tests), while full HIL remains a more complex stage involving mathematical models of dynamic systems and specialized I/O interfaces. The stack bridges the gap from “no testing” to “automated hardware testing.”

The key advance is that levels 1 and 2 share the same C++ source code as levels 3 and 4. When a test passes on the host and fails on the device, you have narrowed the failure to hardware-specific behavior, or to a mock that doesn’t model that behavior.
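That triage can be written down as a small decision table. The sketch below is a hypothetical helper (Verdict, Triage, and Describe are illustrative names, not part of Pigweed) for comparing one test’s host result with its on-device result.

```cpp
#include <cassert>
#include <string_view>

// Outcome of running the same test source at two pyramid levels.
enum class Verdict { kPass, kSharedLogicBug, kHardwareOrMockGap, kHostOnlyFailure };

// Compare the host result (Levels 1-2) with the on-device result (Level 3).
Verdict Triage(bool host_passed, bool device_passed) {
  if (host_passed && device_passed) return Verdict::kPass;
  if (!host_passed && !device_passed) return Verdict::kSharedLogicBug;
  if (host_passed) return Verdict::kHardwareOrMockGap;  // green on host, red on silicon
  return Verdict::kHostOnlyFailure;                      // red on host, green on silicon
}

std::string_view Describe(Verdict v) {
  switch (v) {
    case Verdict::kPass:
      return "pass";
    case Verdict::kSharedLogicBug:
      return "fails everywhere: debug the shared logic on the host first";
    case Verdict::kHardwareOrMockGap:
      return "host green, device red: hardware-specific behavior or a mock that hides it";
    case Verdict::kHostOnlyFailure:
      return "device green, host red: suspect the fake backend or host-only assumptions";
  }
  return "unknown";
}
```

The cheap case to debug is the one that fails everywhere, because it reproduces on a laptop; the “host green, device red” case is the one worth a bench engineer’s time.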

Pillar One: Pigweed — Write Once, Test Everywhere

Pigweed is Google’s open-source embedded C++ framework, battle-tested in Pixel phones, Nest thermostats, DeepMind robots, and satellites. Its central architectural contribution is the facade/backend pattern.

The Facade Pattern in Practice

Each hardware interaction (SPI, GPIO, UART, I2C, a timer) is expressed as an API contract, the facade, often in the form of a C++ interface class. In firmware, you use the real backend that talks to hardware registers. In host simulation, you use a mock backend that logs calls, returns scripted values, or asserts on call sequences. Depending on the module, the backend is selected at build time or passed in as an object, as in the driver below. The business logic never changes.

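```cpp
#include <array>
#include <cstddef>

#include "pw_result/result.h"
#include "pw_spi/device.h"
#include "pw_status/try.h"

// Hypothetical helper (not shown): converts the sensor's raw bytes to pascals.
float ParsePressureBytes(const std::array<std::byte, 3>& raw);

// Your sensor driver: hardware-agnostic
class PressureSensor {
 public:
  explicit PressureSensor(pw::spi::Device& spi) : spi_(spi) {}

  pw::Result<float> ReadPressurePa() {
    // Reads over SPI: works on host (mock SPI) and on STM32 (real SPI)
    std::array<std::byte, 3> response{};
    PW_TRY(spi_.Read(response));  // returns the error Status early on a bus failure
    return ParsePressureBytes(response);
  }

 private:
  pw::spi::Device& spi_;
};
```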

On the host, spi_ is backed by a test double (called FakeSpiDevice in the test below, which you write yourself) that returns pre-programmed byte sequences. On an STM32 board, it’s a real SPI peripheral backed by DMA. The test for ReadPressurePa() runs identically in both environments.
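To see the facade/backend idea without the Pigweed dependency, here is a minimal std-only sketch. SpiBus, FakeSpiBus, and the 24-bit big-endian, 1-Pa-per-LSB encoding in ParsePressureBytes are all hypothetical stand-ins, not Pigweed’s pw::spi API or any real sensor’s datasheet; std::optional plays the role of pw::Result.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <utility>
#include <vector>

// Facade: the interface the driver depends on (std-only stand-in for pw::spi).
class SpiBus {
 public:
  virtual ~SpiBus() = default;
  // Fills `out` with `len` bytes from the peripheral; returns false on a bus error.
  virtual bool Read(std::byte* out, std::size_t len) = 0;
};

// Host backend: replays scripted bytes and counts how often it was called.
class FakeSpiBus : public SpiBus {
 public:
  explicit FakeSpiBus(std::vector<std::uint8_t> script) : script_(std::move(script)) {}

  bool Read(std::byte* out, std::size_t len) override {
    assert(out != nullptr || len == 0);
    ++read_calls_;
    if (pos_ + len > script_.size()) return false;  // ran out of scripted data
    for (std::size_t i = 0; i < len; ++i) out[i] = std::byte{script_[pos_++]};
    return true;
  }
  int read_calls() const { return read_calls_; }

 private:
  std::vector<std::uint8_t> script_;
  std::size_t pos_ = 0;
  int read_calls_ = 0;
};

// Hypothetical encoding: 24-bit big-endian raw value, 1 LSB = 1 Pa.
float ParsePressureBytes(const std::array<std::byte, 3>& raw) {
  const std::uint32_t value = (std::to_integer<std::uint32_t>(raw[0]) << 16) |
                              (std::to_integer<std::uint32_t>(raw[1]) << 8) |
                              std::to_integer<std::uint32_t>(raw[2]);
  return static_cast<float>(value);
}

// Business logic: identical no matter which backend is injected.
class PressureSensor {
 public:
  explicit PressureSensor(SpiBus& spi) : spi_(spi) {}

  std::optional<float> ReadPressurePa() {
    std::array<std::byte, 3> response{};
    if (!spi_.Read(response.data(), response.size())) return std::nullopt;
    return ParsePressureBytes(response);
  }

 private:
  SpiBus& spi_;
};
```

With this made-up encoding, the bytes 0x01 0x8B 0xCD decode to 101325 Pa. Swapping FakeSpiBus for a class that drives real SPI registers would leave PressureSensor untouched, which is the whole point of the pattern.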

pw_unit_test: One Test Binary, Two Targets

Pigweed’s unit test framework is GoogleTest-compatible but uses no dynamic memory allocation — it runs on bare-metal MCUs. The same test source compiles to an x86 binary for your CI container and an ARM binary for your board:

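```cpp
#include "pw_unit_test/framework.h"

// FakeSpiDevice is a test double you provide (not shown); it returns these bytes on Read().
TEST(PressureSensorTest, ReturnsCorrectPressureForKnownBytes) {
  FakeSpiDevice fake_spi({0x00, 0x67, 0x1A});
  PressureSensor sensor(fake_spi);

  auto result = sensor.ReadPressurePa();
  ASSERT_TRUE(result.ok());
  EXPECT_NEAR(result.value(), 101325.0f, 1.0f);
}
```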

This test catches logic bugs at Level 1. When it also passes on the device at Level 3, you’ve confirmed hardware integration.
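The “no dynamic memory allocation” property is easier to appreciate with a toy version. The sketch below is not how pw_unit_test is implemented; it is a hypothetical registry (Registry and SIMPLE_TEST are illustrative names) showing one way a test framework can collect tests into a fixed-size table during static-object initialization, so nothing ever touches the heap.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdio>

using TestFn = bool (*)();

struct TestCase {
  const char* name;
  TestFn fn;
};

class Registry {
 public:
  static Registry& Get() {
    static Registry registry;  // constructed on first use, no heap involved
    return registry;
  }

  // Adds a test to the fixed-capacity table; fails loudly instead of allocating.
  bool Add(const char* name, TestFn fn) {
    if (count_ == kMaxTests) return false;
    tests_[count_++] = TestCase{name, fn};
    return true;
  }

  // Runs every registered test and returns the number of failures.
  int RunAll() const {
    int failures = 0;
    for (std::size_t i = 0; i < count_; ++i) {
      const bool ok = tests_[i].fn();
      std::printf("[%s] %s\n", ok ? "  OK  " : "FAILED", tests_[i].name);
      if (!ok) ++failures;
    }
    return failures;
  }

  std::size_t size() const { return count_; }

 private:
  static constexpr std::size_t kMaxTests = 64;
  std::array<TestCase, kMaxTests> tests_{};
  std::size_t count_ = 0;
};

// Declares a test function and registers it before main() typically runs.
#define SIMPLE_TEST(name)                                \
  static bool name();                                    \
  [[maybe_unused]] static const bool name##_registered = \
      Registry::Get().Add(#name, name);                  \
  static bool name()

SIMPLE_TEST(AdditionWorks) { return 1 + 1 == 2; }
SIMPLE_TEST(DeliberatelyFails) { return false; }
```

The trade-off is a hard cap on the number of tests per binary, which is usually acceptable on a microcontroller where a failed registration is far easier to debug than a heap exhaustion.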

pw_watch and pw_target_runner: Automated On-Device CI

pw_watch monitors your source tree and, on file save, rebuilds only the affected targets and reruns their tests, which can include running them on an attached device. pw_target_runner can distribute those runs across multiple boards in parallel: the embedded equivalent of parallelized CI workers.

In a Woodpecker pipeline, this becomes:

```yaml
# In a hardware-gated Woodpecker workflow file:
steps:
  - name: device-tests
    image: ubuntu:22.04  # the image must also provide pw_target_runner_client
    commands:
      - pw_target_runner_client -host localhost -port 8080 -binary build/test_binary.elf
    volumes:
      - /dev/ttyACM0:/dev/ttyACM0  # The attached board (volumes require a trusted repository)
```
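pw_target_runner handles distribution itself, so the following is only an illustration of the load-balancing idea behind running test binaries on several boards at once. It assumes you have a per-binary duration estimate (for example, from the previous run) and uses the classic longest-processing-time-first heuristic; TestBinary, BoardPlan, and PlanRuns are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

struct TestBinary {
  std::string name;
  int estimated_seconds;  // assumption: taken from a previous run
};

struct BoardPlan {
  std::vector<std::string> tests;
  int total_seconds = 0;
};

// Longest-processing-time-first: sort by duration (descending), then give each
// binary to the currently least-loaded board. A heuristic, not an optimal schedule.
std::vector<BoardPlan> PlanRuns(std::vector<TestBinary> tests, std::size_t boards) {
  assert(boards > 0);
  std::stable_sort(tests.begin(), tests.end(), [](const TestBinary& a, const TestBinary& b) {
    return a.estimated_seconds > b.estimated_seconds;
  });
  std::vector<BoardPlan> plan(boards);
  for (const TestBinary& t : tests) {
    auto least = std::min_element(plan.begin(), plan.end(),
                                  [](const BoardPlan& a, const BoardPlan& b) {
                                    return a.total_seconds < b.total_seconds;
                                  });
    least->tests.push_back(t.name);
    least->total_seconds += t.estimated_seconds;
  }
  return plan;
}

// Wall-clock time of the whole run: the busiest board finishes last.
int Makespan(const std::vector<BoardPlan>& plan) {
  int worst = 0;
  for (const BoardPlan& b : plan) worst = std::max(worst, b.total_seconds);
  return worst;
}
```

Adding a board only pays off while the busiest board dominates the run time; once a single long binary sets the makespan, splitting that binary helps more than buying hardware.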

Pillar Two: Cosmopolitan — The Toolchain Portability Problem

There’s a subtler problem that Pigweed alone doesn’t solve: your build tools themselves aren’t portable. A Python script that parses firmware map files on your Ubuntu CI machine fails on a Windows developer’s laptop because of path separator differences. These mismatches create builds that are “reproducible” in theory and brittle in practice.

Cosmopolitan Libc addresses this for host-side tooling. It reconfigures GCC and Clang to produce Actually Portable Executables (APEs): polyglot binaries that run natively on Linux, macOS, Windows, FreeBSD, OpenBSD, and NetBSD, on both x86-64 and ARM64, without recompilation.

What This Means for an Embedded Pipeline

Your host-side test infrastructure (the binary that runs pw_unit_test suites on the host, the serial-port listener that collects device test output, the log parser, and the code-coverage reporter) can all be built with cosmocc instead of cc.

The resulting binaries are checked into the repository and behave the same everywhere.

A new team member on Windows clones the repo, runs ./tools/run_host_tests, and gets the same results as the Linux CI agent. No Docker is required for local development.

```shell
# Build the host test runner once with cosmocc
cosmocc -o tools/run_host_tests src/test_runner.c

# Now this binary works on:
# - Ubuntu
# - macOS
# - Windows 11 (native, not WSL; give the file an .exe extension there)
# - Raspberry Pi 5 lab bench server (ARM64)
```
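As an example of the kind of host tool worth building this way, here is a sketch of a log parser that tallies results from captured test output. It assumes GoogleTest-style markers (`[ RUN      ]`, `[       OK ]`, `[  FAILED  ]`); check the exact format your pw_unit_test event handler prints before relying on it. It uses only the C++ standard library, so it is a reasonable candidate for cosmoc++, the C++ driver that ships alongside cosmocc. ParseTestLog and TestSummary are hypothetical names.

```cpp
#include <cassert>
#include <istream>
#include <sstream>
#include <string>
#include <vector>

struct TestSummary {
  int passed = 0;
  std::vector<std::string> failed;  // names of failing tests
};

bool StartsWith(const std::string& s, const std::string& prefix) {
  return s.rfind(prefix, 0) == 0;
}

// True if `rest` names the test that is currently running, optionally followed
// by extra text such as GoogleTest's " (0 ms)" timing suffix.
bool SameTest(const std::string& rest, const std::string& running) {
  if (running.empty()) return false;
  return rest == running || StartsWith(rest, running + " ");
}

// Counts a result only when it matches the preceding RUN line, so summary lines
// like "[  FAILED  ] 1 test(s)." are not mistaken for test names.
TestSummary ParseTestLog(std::istream& in) {
  const std::string kRun = "[ RUN      ] ";
  const std::string kOk = "[       OK ] ";
  const std::string kFailed = "[  FAILED  ] ";
  TestSummary summary;
  std::string running;
  std::string line;
  while (std::getline(in, line)) {
    if (!line.empty() && line.back() == '\r') line.pop_back();  // CRLF from serial or Windows
    if (StartsWith(line, kRun)) {
      running = line.substr(kRun.size());
    } else if (StartsWith(line, kOk) && SameTest(line.substr(kOk.size()), running)) {
      ++summary.passed;
      running.clear();
    } else if (StartsWith(line, kFailed) && SameTest(line.substr(kFailed.size()), running)) {
      summary.failed.push_back(running);
      running.clear();
    }
  }
  return summary;
}

// Convenience overload for logs already held in memory.
TestSummary ParseTestLogText(const std::string& text) {
  std::istringstream in(text);
  return ParseTestLog(in);
}
```

Stripping a trailing carriage return matters in practice: serial captures and Windows tools often produce CRLF line endings, and a parser that ignores them is exactly the kind of “works on my machine” tool this section is about.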

Check your scope: the firmware binary itself is still compiled with arm-none-eabi-gcc. Cosmopolitan is for your development tooling, not for the bare-metal MCU. Also note that Cosmopolitan bundles its own C++ standard library rather than using the system’s libstdc++, so complex C++ code with heavy template metaprogramming or bleeding-edge C++20/23 features may hit compatibility gaps. For simple CLI tools and test runners, this is rarely an issue.

Pillar Three: Woodpecker CI — Self-Hosted, Hardware-Attached, Free

GitHub Actions and GitLab CI aren’t built for embedded firmware out of the box: their hosted runners are ephemeral cloud VMs with no physical I/O. You can’t attach a JTAG probe, a USB-serial adapter, or a power relay to a cloud runner.

Woodpecker CI runs on your hardware. The server is a lightweight Go binary that talks to your Git forge. Agents, the workers that execute pipeline steps, run wherever you need them. One agent on a spare laptop at the lab bench has access to every USB device connected to that machine.
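For a hardware-gated job, a useful first check is whether the board actually enumerated on the agent. This sketch lists the USB serial nodes Linux creates for CDC-ACM devices and USB-serial adapters (ttyACM*, ttyUSB*). FindSerialPorts is a hypothetical helper, and the directory is a parameter so the logic can be tested without hardware; on a Linux lab agent you would pass "/dev".

```cpp
#include <algorithm>
#include <cassert>
#include <filesystem>
#include <string>
#include <system_error>
#include <vector>

namespace fs = std::filesystem;

// Lists candidate USB serial devices in `dev_dir`, sorted by path.
// Returns an empty list (rather than throwing) if the directory can't be read.
std::vector<std::string> FindSerialPorts(const fs::path& dev_dir) {
  std::vector<std::string> ports;
  std::error_code ec;
  for (const auto& entry : fs::directory_iterator(dev_dir, ec)) {
    const std::string name = entry.path().filename().string();
    if (name.rfind("ttyACM", 0) == 0 || name.rfind("ttyUSB", 0) == 0) {
      ports.push_back(entry.path().string());
    }
  }
  std::sort(ports.begin(), ports.end());
  return ports;
}
```

Failing the job early with “no board found” is much cheaper to diagnose than an OpenOCD timeout halfway through a flash step.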

The Agent Model for Hardware

Woodpecker’s edge for embedded CI is its agent label system. You give the agent on the machine where the board is physically connected a label such as hardware: stm32h750, and restrict workflows to agents carrying that label.

Woodpecker routes entire workflow files to agents via labels — not individual steps. So you split your pipeline across multiple workflow files:

```yaml
# .woodpecker/build-and-host.yaml
# Runs on any agent (no hardware needed)
steps:
  - name: build
    image: gcc-arm:13  # placeholder: any image with arm-none-eabi-gcc and CMake
    commands:
      - cmake -B build -DTARGET=stm32h750 -DCMAKE_BUILD_TYPE=Release
      - cmake --build build -j$(nproc)

  - name: host-tests
    image: ubuntu:22.04
    commands:
      - ./tools/run_host_tests  # Cosmopolitan binary, works anywhere

  - name: lint-and-presubmit
    image: ubuntu:22.04
    commands:
      - pw presubmit --step pragma_once --step clang_format --step cpp_checks
```

```yaml
# .woodpecker/device-tests.yaml
# Routed to the agent with the board attached
labels:
  hardware: stm32h750

when:
  - branch: main
    event: push

steps:
  # Each workflow runs in its own workspace, so build the firmware here
  # instead of relying on the output of build-and-host.yaml.
  - name: build
    image: gcc-arm:13
    commands:
      - cmake -B build -DTARGET=stm32h750 -DCMAKE_BUILD_TYPE=Release
      - cmake --build build -j$(nproc)

  - name: flash-and-device-tests
    image: ubuntu:22.04
    commands:
      - openocd -f board/stm32h750b-disco.cfg -c "program build/firmware.elf verify reset exit"
      - pw_target_runner_client -host localhost -port 8080 -binary build/test_binary.elf
```

```yaml
# .woodpecker/hil-nightly.yaml
# Nightly regression on the hardware agent
labels:
  hardware: stm32h750

when:
  - event: cron
    cron: nightly

steps:
  - name: hil-regression
    image: ubuntu:22.04
    commands:
      - python3 tools/hil/run_regression_suite.py --port /dev/ttyACM0
```

The build and host-test workflow runs on every commit — no hardware needed. The device-test workflow triggers on merges to main, routed to the lab agent with the board attached. The HIL regression runs nightly.
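The trigger logic across the three files can be summarized as a small function. This is a hypothetical model of the intent described above, not Woodpecker’s actual filter semantics (for example, how a workflow without a `when` block treats cron events); the returned names simply mirror the workflow file names.

```cpp
#include <cassert>
#include <string>
#include <vector>

enum class Event { kPush, kPullRequest, kCron };

// Which workflows should run for a given event, branch, and cron job name.
std::vector<std::string> WorkflowsFor(Event event, const std::string& branch,
                                      const std::string& cron_name) {
  std::vector<std::string> run;
  // Every commit: build and host tests, no hardware needed.
  if (event == Event::kPush || event == Event::kPullRequest) run.push_back("build-and-host");
  // Merges to main: flash and test on the board.
  if (event == Event::kPush && branch == "main") run.push_back("device-tests");
  // Scheduled: nightly regression on the hardware agent.
  if (event == Event::kCron && cron_name == "nightly") run.push_back("hil-nightly");
  return run;
}
```

Writing the policy down this way makes the cost model explicit: only the cheap, hardware-free workflow runs on every commit, and the shared bench is reserved for main and the nightly slot.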

Why Not GitHub Actions with Self-Hosted Runners?

Woodpecker has three major advantages for the specific embedded use case:

- Air-gap friendly: It has no dependency on the cloud. In defense or medical devices, traffic to github.com is often prohibited. Woodpecker works fully offline.

- Minimal overhead: The server uses a SQLite database and can run comfortably on a Raspberry Pi 5 with ~100MB of RAM.

- Hardware declarations: The agent label mechanism is straightforward for routing hardware-gated workflows, though note that labels apply at the workflow level, not per-step. You’ll need separate workflow files for cloud-only and hardware-attached steps.
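To make the workflow-level routing concrete, here is a simplified model of label matching: a workflow goes to an agent only if every label it requires is present on that agent with the same value. Woodpecker’s real matcher has additional rules, such as built-in labels like the platform, so treat AgentAccepts as a hypothetical illustration rather than its implementation.

```cpp
#include <cassert>
#include <map>
#include <string>

using Labels = std::map<std::string, std::string>;

// A workflow can run on an agent only if every required key is present on the
// agent with exactly the same value. Extra agent labels are ignored.
bool AgentAccepts(const Labels& agent, const Labels& required) {
  for (const auto& [key, value] : required) {
    const auto it = agent.find(key);
    if (it == agent.end() || it->second != value) return false;
  }
  return true;
}
```

Under this model, a workflow with no hardware label runs anywhere, while the device-test workflow can only land on the bench machine, which is exactly why the pipeline is split into separate files.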

Where Host Tests Meet Hardware

The core payoff of this stack is narrowing the gap between what passes in simulation and what works on real silicon. It doesn’t eliminate that gap, since hardware surprises are inherent to the domain, but it makes the gap visible and diagnosable instead of something you discover at system integration.

Without this kind of setup, hardware surprises tend to surface during system integration, which can take weeks and drives up the cost of diagnosis.

What This Stack Actually Gets You (and What It Doesn’t)

Before overselling this: running pw_unit_test on a board via a USB probe is not the same as what the industry calls HIL testing.

A real HIL rig simulates analog sensor signals, controls power rails, injects noise, and emulates bus traffic with precise timing. Plugging an STM32 into a Raspberry Pi and running unit tests over serial is closer to on-target testing — valuable, but a different animal.

This stack also doesn’t address:

- Analog signal simulation. If your firmware reads a 4–20 mA current loop, you still need a DAC or signal generator to produce that signal in a test.

- Environmental testing. Temperature chambers, EMC testing, and vibration are outside the scope of any CI pipeline.

- Compliance certification. ISO 26262 (automotive), IEC 62304 (medical), and DO-178C (aerospace) all require specific evidence and traceability that a YAML pipeline alone doesn’t provide.

- Mock fidelity. The mock backends you write for host testing are only as good as your understanding of the hardware. If you mock away the very behavior that causes the bug, the test is green and useless.
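Here is a hypothetical illustration of the mock-fidelity trap, using an imaginary ADC whose first conversion after wake-up is invalid. A naive mock that always returns the steady value lets a buggy driver pass; a mock that models the wake-up behavior exposes the bug. The part, the 0xFFFF marker, and all class and function names are made up for the example.

```cpp
#include <cassert>
#include <cstdint>

// Facade for a hypothetical ADC.
class Adc {
 public:
  virtual ~Adc() = default;
  virtual std::uint16_t Sample() = 0;
};

// Naive mock: always returns the scripted steady value.
class NaiveAdcMock : public Adc {
 public:
  explicit NaiveAdcMock(std::uint16_t value) : value_(value) {}
  std::uint16_t Sample() override { return value_; }

 private:
  std::uint16_t value_;
};

// Higher-fidelity mock: the first conversion after wake-up reads 0xFFFF.
class WakeupAwareAdcMock : public Adc {
 public:
  explicit WakeupAwareAdcMock(std::uint16_t value) : value_(value) {}
  std::uint16_t Sample() override {
    if (first_) {
      first_ = false;
      return 0xFFFF;  // invalid settling conversion
    }
    return value_;
  }

 private:
  std::uint16_t value_;
  bool first_ = true;
};

// Buggy driver: trusts the very first sample after wake-up.
std::uint16_t ReadAfterWakeBuggy(Adc& adc) { return adc.Sample(); }

// Fixed driver: discards the settling conversion, as the imaginary datasheet requires.
std::uint16_t ReadAfterWakeFixed(Adc& adc) {
  static_cast<void>(adc.Sample());
  return adc.Sample();
}
```

The naive mock keeps the buggy driver green; only the mock that encodes the datasheet’s wake-up rule turns the test red, which is why mock behavior deserves the same review as production code.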

What this stack does get you: a free, self-hosted pipeline that automates the dull parts — compiling, running logic tests on the host, flashing firmware, and collecting results — so your limited engineering hours go toward the hard problems instead of manual build-flash-check cycles.

The sweet spot is a firmware team of 3–8 engineers at a robotics startup, an industrial IoT company, or a hardware startup that doesn’t have a $100K test-rig budget, especially when team members work on different operating systems and containerization is already a necessity.

Resources

- Pigweed documentation — start with the Tour of Pigweed and the Sense showcase

- Pigweed SDK announcement (Google, Aug 2024)

- Cosmopolitan Libc — cosmocc toolchain and APE format

- Woodpecker CI — documentation and Docker Compose quick start

- Golioth Firmware SDK HIL testing — a detailed real-world HIL CI implementation worth studying